Cost to Raise a Dollar Is a Terrible North Star

Nonprofits don’t drift into short-termism because fundraisers are shallow, boards are naïve, or donors are irrational. They drift because the volume-machine scorecard makes short-termism feel responsible.

Conversion rate, cost per acquisition, ROAS, and cost to raise a dollar define success as whatever is immediate and attributable. Inside that world, the only decisions that look smart are the ones that win quickly. The scorecard isn’t neutral, it’s your default strategy engine.

When you reward only what you can attribute cleanly this month, you systematically underinvest in what creates next year’s demand. Then, when demand weakens, the scorecard “proves” the underinvestment was rational, because the only tactics left that still show clean returns are extraction tactics.

This is the starvation loop in dashboard form.

Why efficiency metrics quietly cap growth

Marketing science has known for decades that growth requires balancing activity to capture existing demand with activity to create tomorrow’s. Overweight direct response and you get short-term gains paired with long-term decay, because brand, memory, and mental availability are underfunded.

Nonprofits face an added constraint. Overhead aversion and efficiency ratios train donors and boards to distrust investment that does not produce immediate revenue, even when that investment funds the very capacity that makes impact possible. The result is a system that rewards superficial cleanliness while quietly shrinking the future donor pool.

Cost-to-raise-a-dollar logic feels disciplined, but it prefers tactics that look efficient in a short window, even if those tactics reduce long-term growth. Organizations respond rationally to what the scorecard rewards, which means decline is not chosen; it is measured into existence.

A scorecard that can see compounding

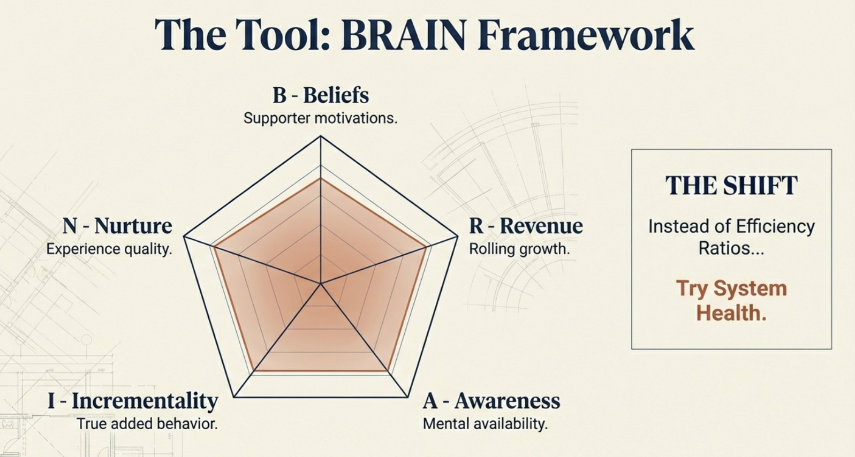

BRAIN is a full-system measurement framework designed to make long-term effects visible and defensible inside organizations otherwise pulled toward short-term proof. It is not a dashboard. It is an operating rule for growth.

BRAIN stands for:

- Beliefs: understanding why supporters give

- Revenue: growth as the non-negotiable constraint

- Awareness: mental availability and memory

- Incrementality: causal lift, not credit-claiming

- Nurture: experience, commitment, and feedback loops

The old scorecard tries to explain growth by staring backward at the last step in the journey. BRAIN measures the upstream drivers that create that step, then uses revenue growth as the verdict on whether the system is working.

If a metric says you are “doing great” while revenue and donor counts are shrinking, you are not progressing, you’re rearranging deck chairs.

Beliefs: behavior is not explanation

Most fundraising data is behavioral. Opens, clicks, gifts, recency, frequency. Behavioral data is indispensable, but incomplete. It tells you what happened, not why.

Behavior is an output, motivation is the system producing it. When organizations rely exclusively on behavior, they optimize what is easiest to move in the short term. That usually means urgency, pressure, and repetition. These tactics can generate revenue today while degrading the psychological conditions that sustain giving tomorrow.

Primary research is not optional because it measures what CRMs cannot: identity, motivation quality, perceived agency, trust, and relationship strength. Skepticism toward surveys usually reflects bad methodology, not a flaw in attitudinal data itself. Poor surveys produce noise. Well-designed ones explain behavior.

The power comes from integration. When attitudinal data is joined with behavioral data, organizations can distinguish intrinsic motivation from pressure, identify which experiences strengthen commitment, and design systems that grow value rather than extract it.
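To make the integration step concrete, here is a minimal sketch of joining survey answers to giving records and comparing repeat giving by stated motivation. The field names (`donor_id`, `motivation`, `gave_again`), both helper functions, and every record are hypothetical and invented for illustration; they are not results and not a prescribed schema.

```python
from collections import defaultdict


def join_survey_to_crm(survey_rows, crm_rows):
    """Inner-join survey answers to CRM records on donor_id."""
    crm_by_id = {row["donor_id"]: row for row in crm_rows}
    return [
        {**crm_by_id[s["donor_id"]], **s}
        for s in survey_rows
        if s["donor_id"] in crm_by_id
    ]


def repeat_rate_by_motivation(joined_rows):
    """Share of donors who gave again, grouped by self-reported motivation."""
    outcomes = defaultdict(list)
    for row in joined_rows:
        outcomes[row["motivation"]].append(1 if row["gave_again"] else 0)
    return {k: sum(v) / len(v) for k, v in outcomes.items()}


# Made-up records; field names are assumptions for this sketch.
survey = [
    {"donor_id": 1, "motivation": "intrinsic"},
    {"donor_id": 2, "motivation": "pressure"},
    {"donor_id": 3, "motivation": "intrinsic"},
    {"donor_id": 4, "motivation": "pressure"},
    {"donor_id": 9, "motivation": "intrinsic"},  # no CRM match, dropped
]
crm = [
    {"donor_id": 1, "gave_again": True},
    {"donor_id": 2, "gave_again": False},
    {"donor_id": 3, "gave_again": True},
    {"donor_id": 4, "gave_again": True},
    {"donor_id": 5, "gave_again": False},  # never surveyed, dropped
]

joined = join_survey_to_crm(survey, crm)
rates = repeat_rate_by_motivation(joined)
print(rates)
```

The point of the sketch is the join itself: once the "why" sits in the same row as the "what," segmentation and cadence decisions can use both. The sample values say nothing about real donors.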

A survey that lives in a slide deck is insight theater. A survey that feeds segmentation, cadence, and message design is strategy.

Revenue: a constraint that ends self-deception

Revenue is the North Star in the BRAIN system not because it explains performance, but because it constrains interpretation.

A simple growth calibration does the work:

- Revenue Growth = last 365 days ÷ prior 365 days

- Donor Growth = unique donors last 365 days ÷ prior 365 days

A ratio above 1 means growing; below 1 means shrinking. Everything else lives beneath that reality.
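As a rough sketch of the calibration above, both ratios can be computed from a flat gift log. The `growth_ratios` function, the `(donor_id, gift_date, amount)` tuple layout, and the sample gifts are assumptions made for illustration, not a prescribed implementation or real results.

```python
from datetime import date, timedelta


def growth_ratios(gifts, as_of):
    """Return (revenue_growth, donor_growth) for the 365 days ending on as_of.

    gifts is an iterable of (donor_id, gift_date, amount) tuples; this layout
    is an assumption for the sketch, not a required schema.
    """
    recent_start = as_of - timedelta(days=365)
    prior_start = as_of - timedelta(days=730)
    recent_revenue = prior_revenue = 0.0
    recent_donors, prior_donors = set(), set()

    for donor_id, gift_date, amount in gifts:
        if recent_start < gift_date <= as_of:
            recent_revenue += amount
            recent_donors.add(donor_id)
        elif prior_start < gift_date <= recent_start:
            prior_revenue += amount
            prior_donors.add(donor_id)

    if prior_revenue == 0 or not prior_donors:
        raise ValueError("no gifts in the prior 365-day window")
    return recent_revenue / prior_revenue, len(recent_donors) / len(prior_donors)


# Made-up gifts: revenue rises while the donor base shrinks.
sample_gifts = [
    ("a", date(2023, 6, 1), 100.0),
    ("b", date(2023, 9, 15), 50.0),
    ("c", date(2023, 11, 20), 50.0),
    ("a", date(2024, 5, 1), 150.0),
    ("d", date(2024, 8, 10), 90.0),
]

revenue_growth, donor_growth = growth_ratios(sample_gifts, date(2024, 12, 31))
print(f"Revenue growth: {revenue_growth:.2f}")
print(f"Donor growth:   {donor_growth:.2f}")
```

With these invented numbers, revenue growth lands above 1 while donor growth falls below 1, which is exactly the pattern where efficient extraction hides a shrinking base.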

This removes the ability to confuse activity with progress. A campaign did not “perform well” if it extracted efficiently from a shrinking base. A channel did not “work” if ROAS improved while donor counts fell.

Revenue is not what you optimize directly, it’s the constraint within which all other metrics must make sense.

Awareness is not exposure, it’s mental availability.

Mental availability is the probability that your organization comes to mind easily in a relevant giving context. Decades of evidence show that growth comes from increasing memory, not persuasion. Distinctive assets, recall, and recognition matter because they lower friction and change economics over time.

Incrementality exists to enforce honesty.

Attribution assigns credit, it does not establish causality. Without counterfactuals, organizations mistake volume for growth and activity for impact. Incrementality asks the only question that matters: did this create behavior that would not have happened otherwise?
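One common way to get a counterfactual is a randomized holdout: a slice of the audience is deliberately not contacted, and its giving rate stands in for what would have happened anyway. The sketch below assumes that design; the `incremental_lift` function and all numbers are invented for illustration, and a real test would also need enough volume to separate lift from noise.

```python
def incremental_lift(treated_n, treated_givers, holdout_n, holdout_givers):
    """Compare a campaign group with a randomly held-out group.

    Returns (lift, incremental_givers), where lift is the difference in giving
    rates and incremental_givers is the lift applied to the treated group.
    """
    if treated_n <= 0 or holdout_n <= 0:
        raise ValueError("both groups must be non-empty")
    treated_rate = treated_givers / treated_n
    baseline_rate = holdout_givers / holdout_n  # the counterfactual estimate
    lift = treated_rate - baseline_rate
    return lift, lift * treated_n


# Made-up numbers: attribution would credit the campaign with all 300 gifts.
lift, incremental_givers = incremental_lift(
    treated_n=10_000, treated_givers=300, holdout_n=2_000, holdout_givers=50
)
print(f"Lift in giving rate: {lift:.3%}")
print(f"Incremental givers:  {incremental_givers:.0f} of 300 attributed")
```

In this invented case the holdout gives at 2.5% without any contact, so only about 50 of the 300 attributed gifts are behavior the campaign actually created.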

Nurture measures experience and commitment, not output.

Retention is a lagging indicator. What matters upstream is whether interactions support autonomy, competence, and relatedness. If you aren’t measuring the supporter experience, then you aren’t in the experience business, no matter how many times you talk about it. And if you do not measure it directly, you will not see deterioration until it shows up as churn, at which point it is too late to intervene.

Measurement as culture

BRAIN is not a reporting framework, it’s a discipline. Every organization already has a measurement culture, revealed by what gets celebrated and defended. If local wins earn praise, teams optimize locally. If flattering metrics substitute for system health, drift is inevitable.

The shift is complete when organizations stop asking “Did this work?” and start asking “Did this change anything?” When the scoreboard makes long-term decisions rational, short-term pathologies loosen on their own.

The purpose of measurement is not to justify what you already did. It is to make better choices inevitable.

Kevin

Hi Kevin, in theory this all sounds great. But in reality, the only things you can measure are the donations that came into the organization by channel (by appeal, by list, etc.), and you know the investment, so you know the return on investment/cost to raise a dollar. If your cost to raise a dollar for a donor appeal is more than $1, you have a problem and you need to revisit your campaigns.

You can measure retention over time so you can look at short-term and long-term.

You can measure complaints and cancellations as an indicator of a problem or a trend. But also make sure you set the bar for what is an acceptable number of complaints in relation to the number reached. Sometimes people start worrying about 2 complaints after a mail or phone campaign when the agency mailed or called thousands of people.

Other than that, did this work? Yes, it raised xyz dollars. When testing, did it work? Yes, it increased the average gift, or no, it lowered the response rate, or… Did it change anything? Yes, the money raised made a difference to the constituents the nonprofit serves. I’m more inclined to focus on how we can get nonprofits to focus on long-term revenue instead of short-term gains. You need to have the information to do so, and leadership needs to be willing to look at it with that mindset. But it’s hard when so many nonprofits struggle to pay the ‘short-term’ bills.

Hi Erica, thank you as always for reading and for your willingness to challenge. I’ll respect your pushback with my own.

You’re describing the problem while treating it as the solution. “If your cost to raise a dollar is more than $1, you have a problem” — this is precisely the logic that keeps organizations small and shrinking.

If you actually believe your ROAS reports on retargeting or paid search (as an example), you should be running to the bank, taking out the biggest loan they’ll give you, dumping every dollar into more retargeting, and paying them back with massive interest every single day. But nobody does that because deep down, everyone knows those numbers are crap, they measure credit-taking, not causality.

This isn’t theory. Our clients are growing. Real revenue growth. Real donor growth. Measured over rolling 365-day periods. This stuff works. The future is here, it’s just not evenly distributed.

The sector donor base is shrinking, small charities almost never get big, and many charities of all sizes are actively declining. Does this look like “best practice” is working? Or does it look like an entire sector optimizing itself into irrelevance?

You say “did this work? Yes, it raised XYZ dollars.” But if you raised XYZ dollars while your donor count dropped and next year’s pipeline got weaker, it didn’t work. You just extracted efficiently from a deteriorating base. The metrics told you that you were winning while you were actually dying.

The trap is structural. Small nonprofits start with low mental availability — fewer people think of them, remember them, or notice them when giving moments arise. So they lean into direct response because it’s the only thing that produces revenue this month. But harvesting without planting locks in the weakness. You stay small because you’re optimizing for short-term efficiency.

Meanwhile, larger organizations benefit twice. Strong brand makes every appeal more efficient, generating surplus. That surplus funds more brand investment, which increases mental availability further. Double advantage. Small organizations get double jeopardy: weak brand forces short-term tactics, and short-term tactics prevent brand growth.

The belief that brand only works when you’re already big is exactly backwards. Marketing science shows growth comes from penetration, not loyalty. Small organizations don’t grow by squeezing the same donors harder. They grow by being remembered and chosen by more people. A small increase in mental availability creates disproportionate gains when you’re starting from “rarely thought of.”

Waiting for surplus before investing in brand is waiting for a condition your current strategy makes impossible. Surplus is the outcome of brand strength, not the prerequisite.

The system needs an overhaul. Not tweaks. Not better execution of the same playbook. A different scorecard that makes long-term decisions rational instead of reckless.

You can keep measuring what’s easy and dying slowly. Or you can measure what matters and actually grow.