Does Your A/B Test Have a People Problem?

“If only we could get more people to give…”

“If only we could get more people to give and do so more regularly.”

“Let’s devise a test.”

The HiPPO (the highest-paid person’s opinion) doesn’t hesitate.

“More people will give if we change the landing page. Less text. Or more text. Fewer fields. We should test that.”

“Great,” says someone else in the room. “We should absolutely test that. But if we’re serious about improving results, we should probably acknowledge something first.”

She pauses just long enough to register a shift.

“A lot of choices people make are tied to who they think they are. And since people aren’t all the same, we probably shouldn’t be sending them to the same page.”

A few nods. Mostly silence. Then, from the head of the table:

“Sorry,” the HiPPO says, blinking back in. “I must have dozed off. My goal is to keep things simple. And if need be, simpler than possible.”

Many tests fail not because of what’s tested but because of what’s assumed further up the logic.

When you run a clean A/B test with random assignment, you’re treating the audience as interchangeable. You’re assuming whatever works for one group should work for everyone.

But people aren’t reacting to your page in a vacuum; they’re filtering it through who they are, what they value, what they notice, and what feels right to them. Treat all of that as fixed and you’re left testing surface features while the real drivers stay untouched.

So we test what’s easy.

- Headlines

- Images

- Button copy

- Form length

None of those are irrelevant, but they’re downstream.

If two people are coming to the same page for entirely different reasons, changing the number of fields isn’t going to reconcile that. At best, you get a small lift averaged across everyone. At worst, you get noise that looks like learning.
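To make the averaging point concrete, here is a small Python sketch with made-up numbers. It assumes three donor segments (borrowing the meaning-oriented, relational, and task-oriented groups described later in this piece) and a hypothetical variant B with fewer form fields. Every count is invented for illustration; none of it comes from a real test.

```python
from dataclasses import dataclass


@dataclass
class Arm:
    """One arm of a test: how many people saw the page and how many gave."""
    visitors: int
    donors: int

    @property
    def rate(self) -> float:
        return self.donors / self.visitors


def lift(control: Arm, variant: Arm) -> float:
    """Relative lift of the variant's giving rate over the control's."""
    return (variant.rate - control.rate) / control.rate


# Hypothetical counts, invented for illustration: (control A, variant B) per segment.
results = {
    "meaning-oriented": (Arm(1000, 50), Arm(1000, 40)),
    "relational": (Arm(1000, 60), Arm(1000, 60)),
    "task-oriented": (Arm(1000, 40), Arm(1000, 52)),
}


def pooled(results: dict[str, tuple[Arm, Arm]]) -> tuple[Arm, Arm]:
    """Collapse all segments into one A/B comparison, as a single-page test would."""
    control = Arm(sum(a.visitors for a, _ in results.values()),
                  sum(a.donors for a, _ in results.values()))
    variant = Arm(sum(b.visitors for _, b in results.values()),
                  sum(b.donors for _, b in results.values()))
    return control, variant


# Per-segment lifts first, then the blended number a one-page test would report.
for name, (a, b) in results.items():
    print(f"{name:>17}: {lift(a, b):+.1%}")
print(f"{'everyone':>17}: {lift(*pooled(results)):+.1%}")
```

With these invented numbers, the per-segment lifts come out to -20.0%, +0.0%, and +30.0%, while the pooled comparison shows about +1.3%: a small lift averaged across everyone that hides one group being helped and another being hurt. The sketch deliberately ignores sample size and significance testing; it only shows the arithmetic of averaging.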

That’s how you end up with months of testing and very little to show for it. Back to our non-HiPPO.

She went a step further and worked with a behavioral science group (rhymes with TonerChoice) to understand how the organization’s donors differ in ways that matter.

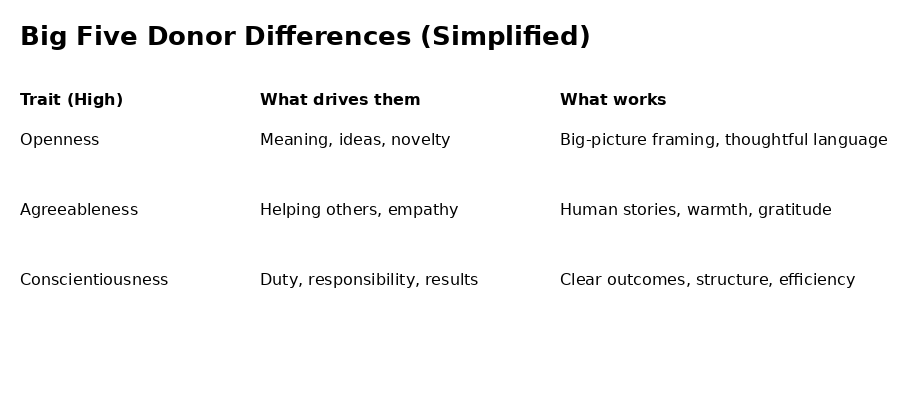

The output wasn’t complicated. One group leans into meaning and ideas. They want context, depth, a sense that what they’re doing fits into something thoughtful.

Another group is more relational. They respond to people, to stories, to a sense of helping and connection.

A third group is more task-oriented. They want clarity, structure, and evidence that what they’re doing works.

Same cause, same ask, but different entry points. And she had a way to identify them, too.

She put the slide up. And the room… was already checking out, because this is where things get inconvenient.

If you take difference seriously, you can’t hide behind one answer anymore. You probably need more than one landing page, and you need to test within groups, not across them.

And you need a point of view on what actually drives behavior, which is harder than swapping headlines and calling it strategy. Simple is appealing because it reduces decisions.

But this version of simple that defies reality comes with a cost. It pushes all the real variation into the results, where it shows up as weak lifts, inconsistent findings, and tests that don’t replicate.

We call that noise, but it isn’t noise. It’s difference we chose to ignore. The real shift is this:

Not:

“What version works best?”

But:

“For whom does this work, and why?”

This shift forces you to think differently about:

- identity

- traits

- motivation

- constraints

— Kevin